Activate Framework: A skills kit for AI development in government

A question we’re constantly exploring at Ad Hoc is ‘how should teams adapt as technology and users’ expectations change?’ Right now, one of the biggest shifts is the rise of large language models (LLMs) and coding agents. These tools are quickly becoming part of how teams build and deliver digital services.

Today we’re open-sourcing Activate Framework, a starter kit that helps teams codify their preferred approaches to building products for government so that tools like Anthropic Claude and GitHub Copilot can apply them. The Framework builds on Activate, our AI-enabled product-delivery approach that captures how we use AI to expand the impact of our teams working with government agencies.

Activate started as an opportunity to document the successful ways we work with agencies. We wanted to capture those practices clearly for ourselves, future teammates, and our customers. With the introduction of coding agents, that goal expanded to ensure the AI coding tools we use also apply those lessons in a consistent, reliable way.

Activate Framework makes those practices explicit and reusable. By open-sourcing it, we hope teams across federal, state, and local governments can adopt it, adapt it, and contribute improvements.

The challenge: Codifying ways of working

Most teams have their own ways of working: how they conduct code reviews, how they create and iterate on README files, or how they format commit messages. These practices are either captured in internal documentation or passed down informally to new teammates as they onboard. With the introduction of AI coding agents, those practices now need to be captured so our tools follow those same ways of working.

When Anthropic introduced Skills, a new pattern emerged: extract proven practices into clearly defined, reusable files that agents can reliably discover and apply.

This idea has since been adopted and expanded throughout the tools ecosystem; for example, VS Code now offers a full set of customization primitives that let teams embed guidance directly into their repositories. Activate Framework uses these emerging standards to create a clear hierarchy of files that can be shared, versioned, and improved like code.

How it works and who it’s for

Activate Framework is designed to work especially well with GitHub Copilot, and it can be adapted for other AI coding tools with similar customization patterns.

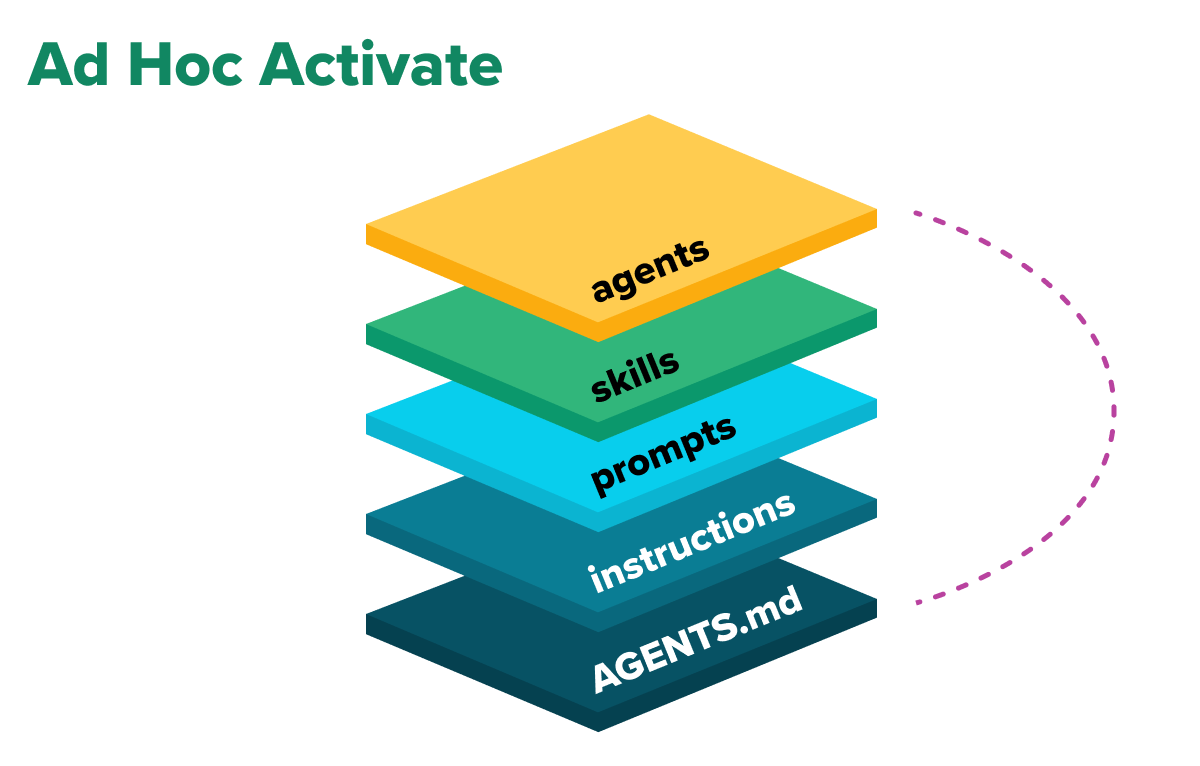

It’s a starter kit for repositories, individual users, and teams that combines:

- An AGENTS.md template for always-on project guidance

- Scoped instruction files under .github/instructions/

- Reusable prompts under .github/prompts/

- Procedural skills under .github/skills/

- Optional specialized agents under .github/agents/

Within coding agents, these components act as a layered system: global conventions in AGENTS.md, contextual rules in instruction files, repeatable workflows in skills, and targeted behavior through specialized agents. This makes team practices explicit, versioned, and auditable.
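As a rough illustration of that layering, the sketch below scaffolds the directories listed above into an empty folder. The directory names come from the list; every file name and all file contents are hypothetical placeholders rather than the actual starter files, and the `applyTo` front matter reflects our understanding of VS Code's instruction-file convention, so treat it as an assumption.

```python
from pathlib import Path
import tempfile

# Directory names follow the list above. File names and contents are
# illustrative assumptions, not the real Activate Framework files.
LAYOUT = {
    "AGENTS.md": "# Project guidance\n\nAlways-on conventions for this repository.\n",
    ".github/instructions/api-errors.instructions.md": (
        "---\napplyTo: \"src/api/**\"\n---\nReturn structured error responses.\n"
    ),
    ".github/prompts/draft-readme.prompt.md": "Draft a README for this package.\n",
    ".github/skills/api-docs/SKILL.md": (
        "---\nname: api-docs\ndescription: Generate API documentation.\n---\n"
    ),
    ".github/agents/reviewer.agent.md": "You review pull requests for this team.\n",
}


def scaffold(root: Path) -> list[Path]:
    """Create each file in LAYOUT under root, skipping files that already exist."""
    created = []
    for relative, content in LAYOUT.items():
        target = root / relative
        if target.exists():
            continue  # never overwrite guidance a team has already customized
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        created.append(target)
    return created


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for path in scaffold(Path(tmp)):
            print(path.relative_to(tmp))
```

Skipping existing files is a deliberate choice in this sketch: a second run adds only what is missing, so local customizations are left alone.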

Activate Framework is a strong fit for:

- Engineering teams standardizing AI-assisted development

- Technical leads reducing cross-repo inconsistency

- Platform and enablement teams creating reusable guidance

- Organizations scaling from pilot usage to broader adoption

One thing these groups all have in common is a desire to reliably guide coding agents’ outputs.

Why this matters for government

Government delivery carries expectations that most software teams don’t face: compliance requirements, accessibility standards, cross-vendor coordination, and the need to document how decisions were made. When AI tools participate in that delivery, teams need confidence that those tools are following the same rules as everyone else.

Activate Framework makes AI-assisted development auditable by design. Because guidance lives in version-controlled files alongside the code, teams can trace what rules an agent followed, when those rules changed, and who approved them. That traceability matters when work products are subject to review by contracting officers, agency stakeholders, or oversight bodies.

The framework also lowers the barrier for government teams exploring AI adoption. Rather than starting from scratch, teams can begin with a tested set of practices for code review, security, accessibility, and documentation, and then customize from there.

Real-world use case

Imagine an agency launching a new product that spans multiple delivery teams and repositories. Program leadership wants consistent engineering practices without forcing teams into inorganic workflows.

They start with a multi-team rollout model:

- Establish a shared baseline. A central repository publishes a common AGENTS.md and a small set of shared instruction files. Teams inherit that baseline and extend locally where needed.

- Address cross-team friction first. Initial guidance focuses on branching, commit format, traceability, and code review expectations – the conventions that create the most inconsistency across teams.

- Scale with reusable workflows. As teams stabilize, they add more instruction, skill, and agent files for recurring tasks.

When teams discover a guidance gap, they create new customization files directly in VS Code. In Copilot Chat, they can ask for what they need in plain language:

- “Let’s work together to create an instruction file for our API error handling conventions.”

- “Draft a skill for our test-first feature workflow. We’ll work together to improve it.”

From there, teams work iteratively from their own code:

- Generate a first draft from working patterns.

- Keep each file tightly scoped.

- Refine through real delivery work.

- Keep what improves outcomes and revise or remove what doesn’t.

This approach gives teams consistency where it matters and flexibility where needed, without starting from scratch.

Early lessons from adoption

Teams adopting Activate Framework patterns consistently report the same learning curve:

- Start small and focused.

- Start with an AGENTS.md before layering.

- Use instruction files for context-specific rules.

- Add skills for output-oriented actions, e.g., creating API documentation.

- Measure impact through real delivery outcomes (review quality, cycle time, onboarding clarity, user impact).

One of the hardest parts of adoption was measuring impact. We wanted to know whether Activate Framework improved team velocity and output quality, but those are often lagging signals influenced by many factors. The challenge was identifying leading indicators that show whether teams are moving in the right direction.

Our approach was to keep measurement simple: ask teams how they already define velocity and quality, then track those signals over time. For velocity, the metric was straightforward – how long it takes to move work from issue to PR to production, and whether that cycle time decreases after adopting Activate Framework. Quality was harder to measure directly, so we assessed it qualitatively by asking developers for their impressions.
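One way to track the velocity signal is to compare median cycle time before and after adoption. The sketch below is a minimal example under stated assumptions: the function names are hypothetical, each work item is reduced to an issue-opened date and a production-deploy date, and the dates are made-up sample values for illustration only, not results from any team.

```python
from datetime import datetime
from statistics import median


def cycle_time_days(opened: str, deployed: str) -> float:
    """Days between an issue being opened and its change reaching production."""
    start = datetime.fromisoformat(opened)
    end = datetime.fromisoformat(deployed)
    return (end - start).total_seconds() / 86400


def median_cycle_time(items: list[tuple[str, str]]) -> float:
    """Median cycle time in days across (opened, deployed) pairs."""
    return median(cycle_time_days(opened, deployed) for opened, deployed in items)


# Made-up sample data: (issue opened, deployed to production).
before_adoption = [
    ("2025-01-06", "2025-01-16"),
    ("2025-01-08", "2025-01-15"),
    ("2025-01-13", "2025-01-22"),
]
after_adoption = [
    ("2025-03-03", "2025-03-08"),
    ("2025-03-04", "2025-03-10"),
    ("2025-03-10", "2025-03-14"),
]

if __name__ == "__main__":
    print("median days before:", median_cycle_time(before_adoption))
    print("median days after:", median_cycle_time(after_adoption))
```

The median is used here rather than the mean so that a single long-running item does not dominate the comparison. Because cycle time is influenced by many factors, a change in this number is a prompt for conversation with the team, not proof of cause.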

Our most promising results come when teams adapt Activate for their domains. For example, they customize a skill or instruction file based on the conventions and standards from their own codebases. From that point forward, changes to their code follow a prescribed pattern.

Another dimension we’re exploring is the balance between context management and how exhaustive Activate Framework should be. As we discussed in “Humans required: Why AI coding still requires human experts to work most effectively,” research suggests that skills and instruction files, while necessary, can hurt performance when they are over-comprehensive, because they introduce cognitive load and redundancy.

Activate Framework captures some of these core lessons learned for teams to build on and customize to their domain and needs. For example, combined teams from IronArch and Ad Hoc that deliver under our joint venture Peregrine Digital Services customized Activate Framework to address the needs of those working on public-facing digital services. Doing so has helped accelerate how AI capabilities are designed, evaluated, and deployed in support of our shared customer’s missions.

Getting started

Activate Framework is open source and available now at github.com/adhocteam/activate-framework.

Clone the repo, explore the starter files, and adapt them to your team’s ways of working. Contributions, feedback, and issues are welcome – we’d love to see how other teams put this to use.
